Answered: Your Most Burning Questions about Machine Learning Chatbot

Author: Meri Lenz | Posted: 24-12-10 11:17

We see the best results with cloud-based LLMs, as they are currently more powerful and easier to run than open source options. But local and open source LLMs are improving at a staggering rate. As part of our Open Home values, we believe users own their own data (a novel idea, we know) and that they can choose what happens with it. You can use this in Assist (our voice assistant) or interact with agents in scripts and automations to make decisions or annotate data. Home Assistant currently offers two cloud LLM providers with various model options: Google and OpenAI. Last January, the most upvoted article on HackerNews was about controlling Home Assistant using an LLM. This means that using an LLM to generate voice responses is currently either expensive or terribly slow. Innovations like voice recognition integration are already making waves by enhancing real-time communication during virtual meetings or international conferences, without language barriers getting in the way. As VR and AR technologies continue to evolve, AI-powered tools could incorporate these immersive experiences into internal communication. All this makes Home Assistant the perfect foundation for anyone looking to build powerful AI-powered solutions for the smart home, something that is not possible with any of the other big platforms.

As we have researched AI (more about that below), we concluded that there are currently no AI-powered solutions that are worth it. Read more about our approach, how you can use AI today, and what the future holds. Sam will likely be able to handle many of the more menial tasks in Siri’s arsenal at some point, but for now, in its prototype form, it is primarily geared toward gamer-related queries and Ubisoft titles. To make it a bit smarter, AI companies will layer API access to other services on top, allowing the LLM to do arithmetic or integrate web searches. This level of responsiveness helps companies stay ahead of their competition and deliver better customer experiences. Empowering our users with real control of their homes is part of our DNA, and helps reduce the impact of false positives caused by hallucinations. One of the biggest advantages of large language models is that because they are trained on human language, you control them with human language. The current wave of AI hype revolves around large language models (LLMs), which are created by ingesting huge amounts of data.

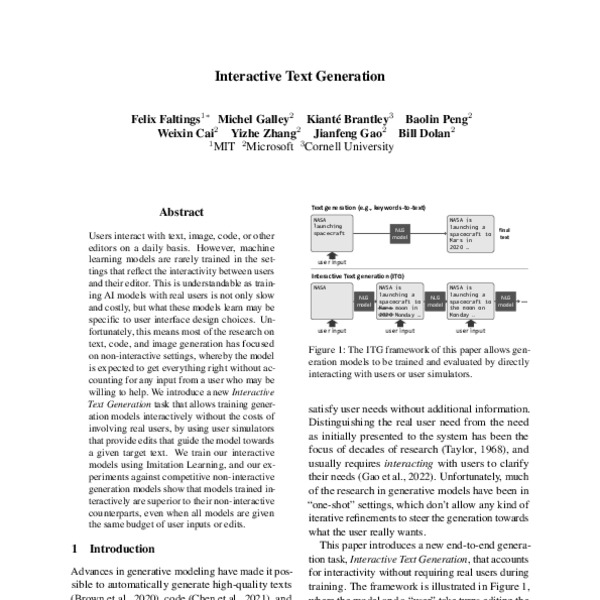

Natural Language Generation (NLG) is a branch of AI that focuses on the automatic generation of human-like language from data. Induced microglia and auditory temporal processing in rats: a model for language impairment? The current API that we offer is just one approach, and depending on the LLM model used, it might not be the best one. Another downside is that depending on the AI model and where it runs, it can be very slow to generate an answer. One of the best things you can do for yourself and your kitchen is to pick a contractor who has a lot of experience with kitchen design. I commented on the story to share our excitement for LLMs and the things we plan to do with them. In response to that comment, Nigel Nelson and Sean Huver, two ML engineers from the NVIDIA Holoscan team, reached out to share some of their experience to help Home Assistant. In this ultimate guide, we will explore the best practices for getting the most out of your interactions with AI. Because it doesn’t know any better, it will present its hallucination as the truth, and it is up to the user to determine if that is correct.
